Problem

In scenarios with weak GNSS signals, visual-inertial odometry is an attractive localization solution. How can we enhance the accuracy and robustness of visual-inertial odometry?

Method

The method fuses stereo camera frames and inertial data with a double window optimization. Because it is implemented on top of the ORB-SLAM framework, it inherits loop closure and map reuse. The term double window, coined by Hauke Strasdat, refers to two groups of factors optimized in a single step. The spatial group contains only factors between keyframes and landmarks, i.e., landmark observations. The temporal group contains landmark observations as well as factors formed from inertial data.
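To make the two groups concrete, here is a minimal Python sketch of how factors might be partitioned into the two windows. The `Keyframe` and `DoubleWindow` types, the `build_double_window` function, the `temporal_size` parameter, and the rule that older keyframes join the spatial window when they observe landmarks seen in the temporal window are all hypothetical illustrations, not the authors' implementation or an ORB-SLAM API.

```python
from dataclasses import dataclass, field


@dataclass
class Keyframe:
    kf_id: int
    landmark_ids: list[int]  # landmarks observed in this keyframe
    has_imu: bool = True     # inertial data links it to the previous keyframe


@dataclass
class DoubleWindow:
    temporal: list[tuple] = field(default_factory=list)
    spatial: list[tuple] = field(default_factory=list)


def build_double_window(keyframes: list[Keyframe], temporal_size: int) -> DoubleWindow:
    """Hypothetical split of factors into a temporal and a spatial window."""
    assert temporal_size >= 1, "temporal window needs at least one keyframe"
    recent = keyframes[-temporal_size:]
    older = keyframes[:-temporal_size]
    window = DoubleWindow()

    # Temporal window: landmark observations of the most recent keyframes...
    for kf in recent:
        for lm in kf.landmark_ids:
            window.temporal.append(("obs", kf.kf_id, lm))
    # ...plus inertial factors between consecutive recent keyframes.
    for prev, cur in zip(recent, recent[1:]):
        if cur.has_imu:
            window.temporal.append(("imu", prev.kf_id, cur.kf_id))

    # Spatial window: landmark observations only (no inertial factors), taken
    # from older keyframes that share landmarks with the temporal window
    # (an assumed covisibility rule).
    active_landmarks = {lm for kf in recent for lm in kf.landmark_ids}
    for kf in older:
        for lm in kf.landmark_ids:
            if lm in active_landmarks:
                window.spatial.append(("obs", kf.kf_id, lm))
    return window


kfs = [Keyframe(0, [1, 2]), Keyframe(1, [2, 3]), Keyframe(2, [3, 4]), Keyframe(3, [4, 5])]
w = build_double_window(kfs, temporal_size=2)
```

With these four keyframes and a temporal window of two, the temporal group holds four landmark observations plus one inertial factor between keyframes 2 and 3, while the spatial group holds only `("obs", 1, 3)`: keyframe 1 shares landmark 3 with the temporal window, and keyframe 0 shares nothing.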

Key results

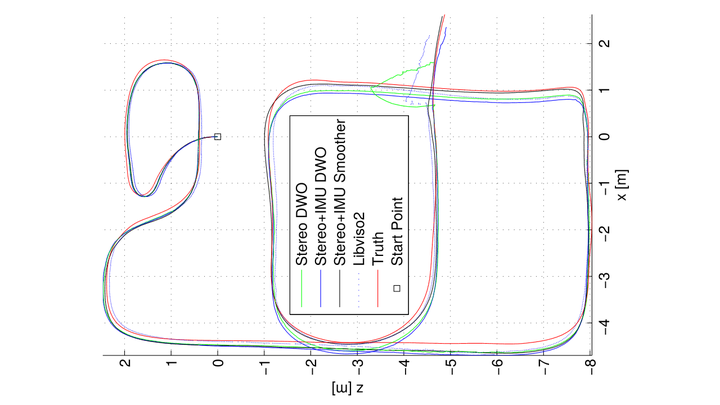

Tests on the Tsukuba Stereo and KITTI datasets showed that our approach achieved higher accuracy than a sliding window smoother and an incremental stereo camera odometry.

One byproduct of the stereo camera-IMU fusion method is a pure stereo camera odometry: because the IMU data are dispensable to the proposed method, it also runs on stereo frames alone.
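The two windows and the optional inertial term can be summarized in a generic cost, sketched here in our own notation rather than the paper's: $\mathcal{X}$ collects keyframe states and landmark positions, $\mathcal{O}_T$ and $\mathcal{O}_S$ are the sets of (keyframe $i$, landmark $j$) observations in the temporal and spatial windows, $\mathcal{K}_T$ is the set of consecutive keyframe pairs in the temporal window, $\mathbf{e}^{\mathrm{obs}}_{ij}$ is a reprojection residual, and $\mathbf{e}^{\mathrm{imu}}_{k,k+1}$ is an inertial residual between consecutive keyframes, each weighted by its covariance.

$$
\min_{\mathcal{X}} \; \sum_{(i,j)\in\mathcal{O}_T\cup\mathcal{O}_S} \left\lVert \mathbf{e}^{\mathrm{obs}}_{ij}(\mathcal{X}) \right\rVert^2_{\Sigma_{ij}} \;+\; \sum_{(k,k+1)\in\mathcal{K}_T} \left\lVert \mathbf{e}^{\mathrm{imu}}_{k,k+1}(\mathcal{X}) \right\rVert^2_{\Sigma_{k}}
$$

Without IMU data, $\mathcal{K}_T$ is empty and the second sum drops out, leaving an objective over landmark observations only. This is one way to read the stereo camera odometry byproduct.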